What to upgrade, what to upgrade...

Publication date: 27 August 2004. Last modified: 03-Dec-2011.

I don't know if I can even clearly express the weirdness of this situation.

I've got a PC that's 15 months old now.

And it's still... fine.

This computer was a top-flight performer when it was new, and today it... look, I know how this sounds, but it still pretty much is up there with the best.

All it seems to lack is 3D video performance, compared with the current New Hotness systems.

What the heck is going on?

Can it be true that all I need do to bring myself back within 10% of the cutting edge is swap in a new graphics card?

That can't be right.

Every PC enthusiast knows that a one-year-old PC that was high-spec when it was new may, objectively, still be a perfectly OK system for pretty much any purpose. But it'll be unquestionably beaten by today's high-spec systems. It's a rule of nature.

If you feel like less of a man/woman/Supreme Omnipotent Being when your computer is not actually, as your T-shirt says, bigger, better and faster than everyone else's, then such a state of affairs is, of course, intolerable. The cure is a new graphics card and CPU, at the very least. Probably also a new motherboard, new RAM, and ooh, one of those new 400Gb drives too, thanks, and it'd be a shame not to update your monitor as well, wouldn't it, and after all that then you might as well update the case and power supply, and how have you lived so long without a dual layer DVD burner...

You get the picture.

Which is why I'm confused, now.

Way back when the world was young and the atmosphere had no oxygen in it (May, 2003) I got myself a P4 2.4C, the then-new 800MHz FSB budget-priced Pentium 4 whose stock speed was an acceptable 2.4GHz, but which was pretty much guaranteed to overclock to three gigahertz and beyond.

For a while I ran it at 3.2GHz, then at 3GHz when I suspected that the higher speed was causing the occasional crash. I now ascribe those crashes to evil goblins, but still run at only 3.1GHz, so as not to tempt fate.

The P4 is not a fast processor, in computrons-per-megahertz terms. A 3GHz Pentium III, if such a thing could be made to work without the use of liquid helium, would handily beat a 3GHz P4 for any task you care to name. But a 3GHz-plus P4 is, nonetheless, not a slow processor for any desktop computing application. And, surprisingly enough, nobody seems to have raised the CPU-speed bar all that much over the last year and a half.

The fastest easily available P4s right now have a stock speed of 3.4GHz. That's a whole 9.7 per cent faster than a 3.1GHz chip. Less, actually, since they use the newer Prescott core, which has more cache than the older Northwood (used by my 2.4C) but, clock for clock, is slower.

There seems to be a pretty reasonable chance that you'll be able to get 3.7GHz, or maybe even more, out of a current Prescott core, and you don't need to buy a 3.4GHz one to do it.

That's a good 20% more clock speed than you can wring out of an old 2.4C like mine, but the Prescott performance penalty, and the fact that few PC tasks are solely limited by the CPU speed, means that you'd be lucky to see more than 10% better speed for most real world tasks over a mere Northwood at 3.1GHz.

There's a rule of thumb that says a 10% improvement is the least that you're going to be able to notice without using a stopwatch, so clearly the current top-end P4s aren't offering anything much that older Northwoods didn't.
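The clock-speed comparisons above are plain ratios. A minimal Python sketch of that arithmetic, using only the clock figures quoted in this article (the function name is made up for illustration):

```python
def percent_faster(new_ghz, old_ghz):
    """Return how much higher new_ghz is than old_ghz, as a percentage."""
    return (new_ghz / old_ghz - 1.0) * 100.0

# Stock 3.4GHz P4 versus the 2.4C running at 3.1GHz
stock_gain = percent_faster(3.4, 3.1)
# Hoped-for 3.7GHz Prescott overclock versus the same 3.1GHz Northwood
overclock_gain = percent_faster(3.7, 3.1)

print(f"3.4GHz vs 3.1GHz: {stock_gain:.1f}% more clock")
print(f"3.7GHz vs 3.1GHz: {overclock_gain:.1f}% more clock")
```

These are clock ratios only. They say nothing about the Prescott's clock-for-clock penalty, which is why the real-world gain ends up smaller than the raw numbers suggest.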

Soon, if you've got money to burn, you'll be able to buy P4s with a stock speed of 3.8GHz. They'll only come in the LGA 775 package, though, so you'll need to buy plenty of other hardware on top of your $AU1100-plus CPU - and I wouldn't lay good odds on the new chips having much overclocking headroom.

Intel are, of course, not the only game in town for performance PC CPUs. The kids these days are all excited about AMD's 64 bit chips.

The "AMD64" instruction set (quietly adopted by Intel, who called it EM64T in hopes that nobody would notice...) isn't a selling point for normal users yet, because the 64 bit extensions don't do anything unless you're running a 64 bit operating system, which you very probably aren't. Heck, most Opteron buyers aren't running a 64 bit OS on their expensive multiprocessor systems. The real selling point for these things is that, unlike some earlier 64 bit chips, they're great performers for ordinary present-day 32 bit computing.

It used to be expensive to get into AMD64, but now there are low end Athlon 64s that're practically budget processors. You're still looking at more than a thousand Australian dollars for a 2.4GHz Athlon 64 3800+, and a few hundred more if nothing but an A64 FX-53 will do for you - that's also 2.4GHz, but with twice the RAM bandwidth.

But this will only cost you $AU269.50 delivered, from Aus PC Market here in Australia. US prices start around the $US150 mark, as I write this.

This A64's so cheap because it's the very slowest model, the 1.8GHz "2800+" (Aussie shoppers can click here to order one). It uses the updated "Newcastle" core, which has only half a megabyte of Level 2 cache, versus the megabyte of the original "Clawhammer" core, which is still used by the expensive FX chips. The Newcastle core debuted when AMD filled out the bottom of the A64 range with these newer, cheaper chips, and now it's migrating up through the more expensive chips too, because it can run faster and cooler and has little-to-no real performance penalty compared with the older, cachier core.

All of the cheap(er) A64s fit into a 754 pin socket. Newer A64 FXes use Socket 939; older ones use Socket 940, as does the Opteron. The extra pins enable the more expensive chips to address their second RAM channel - AMD64 processors have memory controllers that're built into the CPU. Socket 754 chips can only access a single channel of plain DDR400 RAM, but that's fine for most desktop computer tasks. Monster RAM bandwidth can be a very handy thing to have for some activities, but most home and office computers seldom or never have to do any of those things. 3D games are about the most demanding software most PCs regularly run, and most of the excitement then is restricted to the memory on the video card, not the system RAM.

The current Socket 754 Newcastle-core Athlon 64 range includes the 2800+, 3000+, 3200+ and 3400+, running at 1.8, 2, 2.2 and 2.4GHz, respectively. At stock speed, the 2800+ and 3000+ offer about the same megahertz per dollar (well, if you want to be picky, at Aus PC Market's current prices the 3000+ is actually about 3% better value than the 2800+...). The faster chips are, as always, lousier value. You'll pay about 18% more per stock-speed megahertz for a 3200+ as for a 3000+; the 3400+ value markup over the 3000+ is almost 40%. The 3400+ has only 1.2 times the stock clock speed of the 3000+, so you really might as well get the cheaper chip.
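To see what those value markups mean in actual money, multiply the clock ratio by the cost-per-megahertz ratio. A rough Python sketch, using only the clock speeds, the 2800+ price and the approximate markups quoted above (the helper name is hypothetical, and the resulting ratios are implied by the text, not quoted store prices):

```python
def price_ratio(clock_ratio, cost_per_mhz_ratio):
    """Relative chip price implied by a clock ratio and a cost-per-MHz ratio."""
    return clock_ratio * cost_per_mhz_ratio

# 2800+: 1800MHz for $AU269.50 delivered (both figures from the text)
mhz_per_dollar_2800 = 1800 / 269.50

# 3200+ versus 3000+: 1.1 times the clock, about 1.18 times the cost per MHz
ratio_3200 = price_ratio(2200 / 2000, 1.18)
# 3400+ versus 3000+: 1.2 times the clock, "almost 40%" more per MHz,
# rounded here to 1.40 (an approximation)
ratio_3400 = price_ratio(2400 / 2000, 1.40)

print(f"2800+: {mhz_per_dollar_2800:.2f} MHz per dollar")
print(f"3200+ costs roughly {ratio_3200:.2f}x a 3000+")
print(f"3400+ costs roughly {ratio_3400:.2f}x a 3000+")
```

In other words, the 3400+ asks for roughly two-thirds more money in exchange for one-fifth more clock speed.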

I, however, chose the humble 2800+ for my upgrading experiment.

I already knew that upgrading to a faster Pentium 4, even if I traded in my motherboard and RAM along with my CPU, wouldn't give me a whole lot more system performance. As explained above, there just aren't any very much faster P4s available yet.

So I wanted to see whether upgrading to a modest Athlon 64 would make much of a difference to my computing experience. Or whether I should just plug a new video card into my old computer and have done with it.

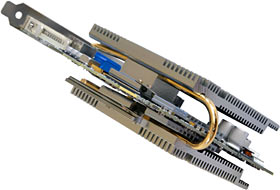

Since I built the overclocked 2.4C machine, my video adapter has been this imposing chunk of technology.

It's Sapphire's Radeon 9700 Pro Ultimate Edition, and it's kitted out with a massive Zalman heat pipe cooling solution that allows it to run completely silently. It's nice to have a video card that contributes nothing to the noise of your PC, and it's also nice to know that it'll never start emitting the distinctive buzz that means it's time for you to try to oil a tiny fan bearing, or to rip the little custom fan off the card altogether and shoehorn something else on.

The heat pipe cooler doesn't do this card any favours in the overclocking department, but it's a rare Radeon 9x00 that has much real overclocking headroom anyway, no matter what cooling you use.

Any Radeon 9x00 (where x is greater than 4) is still a perfectly all right video adapter for most games, but there are two things that're making a lot of people upgrade to newer ATI and Nvidia boards.

One: The new generation cards are substantially faster. The Radeon X800 is the king for most current games; the GeForce 6800 works better for software that uses DirectX 9 features. Both of them trample the previous generation of Radeons and GeForces.

Two: Doom 3. And the upcoming Half-Life 2, but mainly Doom 3.

The Doom 3 engine scales very well. Turn down the prettiness somewhat (from "holy snapping duck-crap" to merely "amazing") and settle for a modest resolution and various older cards will give you a smooth gaming experience. But if you want high resolutions and prettiness settings, you gotta get a current-generation video card.

Hence, this.

In keeping with my cheaper-gear-only policy, this is not a Radeon X800 or a GeForce 6800 Ultra. It's a plain non-Ultra, non-GT, somewhat cut-down GeForce 6800 from Albatron, with a won't-impress-your-friends 128Mb of RAM.

The plain 6800's specs aren't that much worse than the higher-spec GT and Ultra boards. It's got three-quarters of the rendering pipelines, five-sixths of the vertex shaders, and stock core and RAM clock speeds that're 84% and 64% of the Ultra's figures, respectively. It manages 96% and 70% of the GT's clock speeds (the only difference between the GT and the Ultra is clock speed).

The more expensive 6800s are distinctly better than the plain model, but they're not enough better to justify their extra cost, if you ask me.

The Albatron 6800 sells for $AU550 delivered from Aus PC Market (keen Aussie shoppers can click here to order one). 256Mb GeForce 6800 GT and Radeon X800 Pro cards are at least $AU760. Go for gold with an X800 XT or 6800 Ultra and you'll be dropping around a thousand Aussie dollars on your graphics card alone. Almost twice the money; certainly not almost twice the performance.

If you settle for a humble Albatron 6800, you still get a non-trivial amount of hardware for your money. Thanks to a copper heat sink, the Albatron 6800 weighs in at about 534 grams (18.8oz). That's only a hair less than the giant 558-gram Sapphire Radeon, whose bulkier heat sink is mainly aluminium.

I needed a motherboard for the Athlon 64, of course...

...so I picked this one. It's Gigabyte's GA-K8NS, and it sells for only $AU176 delivered from Aus PC Market.

Well, actually, it doesn't, as I write this. They're out of stock. That's probably because the K8NS is rather cheap by nForce3-250-chipset motherboard standards. Australian shoppers can click here to pre-order a K8NS (by the time you read this they may have stock again), but you might do better to order something else. An $AU250 nForce3 board that you can buy today is generally agreed to beat an $AU176 one that you can't buy for three weeks.

(I updated the Gigabyte board's BIOS before I ran the tests, by the way.)

If I were actually upgrading to the Athlon 64 I could reuse the memory from my P4 machine, but I wanted to run the boxes in parallel to do the tests, so I added a cheap and cheerful two-pack of 512Mb Geil DDR400 memory modules ($AU313.50 delivered; Aussies can click here to order it). And the core of the new test machine was complete.

(In case you're wondering, I dipped into my spare-components pile and gave the test box a Lian Li PC-V1000 case and Seasonic SS-400FB power supply. Oh, and an IBM 1391401 keyboard that a reader was kind enough to send me for free, after being forced to relinquish it by his noisy-typing-hating housemates. Nothing but the best for my test mules!)

Overclocking

My P4 was overclocked, so my A64 obviously had to be as well.

It's apparently not remarkable to be able to wind an A64 2800+ up to better than 2.2GHz (giving performance in the "3400+" or better range), but my test system only managed a miserable 1980MHz. That meant a 220MHz Front Side Bus speed - the A64 doesn't really have a FSB in the classical sense, but it does have an interface clock speed that's multiplied to establish the core speed, so FSB is a good enough term.

1980MHz core speed for an A64 is about "3000+" speed, as near as anyone can figure out.

To achieve this speed I had to boost the CPU core voltage to 1.6 volts (the Gigabyte motherboard has a perfectly adequate suite of overclocking features), from the stock 1.5V. The K8NS voltage adjustment goes up to 1.7V, but 230MHz FSB only managed to POST about half of the time even at 1.7V, and Windows never came anywhere near loading.

Somewhere between 220 and 230MHz FSB would no doubt have worked OK at 1.7V, but even if it was 229MHz, that'd still only be 4.1% more CPU speed. Chasing that last few megahertz is a waste of time; the most you're going to manage is an un-noticeable speed increase, and the price you pay is likely to be a computer that's living on the edge of a crash.

The 220MHz FSB overclock is only 1.1 times the 200MHz stock speed. This, as mentioned above, means it's unlikely to be noticeable in any real world task. It's nice to have, and it seemed to be perfectly stable even with the stock cooler in a warm room, but it's not at all worth getting excited about. Practically every CPU can manage a 10% overclock.

The 2.4GHz P4 running at 3.1GHz is, of course, almost 30% overclocked. That's more like it. Oh, it takes me back, it does.
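The overclocking sums in this section are simple enough to sketch in a few lines of Python. The 9x multiplier is an assumption, inferred from the 2800+'s 1800MHz stock speed on a 200MHz clock; the other figures come straight from the text, and the function names are just for illustration.

```python
def core_mhz(bus_mhz, multiplier):
    """Core speed is the interface clock times the CPU multiplier."""
    return bus_mhz * multiplier

def overclock_percent(actual_mhz, stock_mhz):
    """How far above stock a given speed is, as a percentage."""
    return (actual_mhz / stock_mhz - 1.0) * 100.0

# Assumption: multiplier inferred from 1800MHz stock core / 200MHz stock clock
A64_MULTIPLIER = 1800 / 200

a64_core = core_mhz(220, A64_MULTIPLIER)      # Athlon 64 at 220MHz "FSB"
a64_oc = overclock_percent(a64_core, 1800)    # versus its 1.8GHz stock speed
p4_oc = overclock_percent(3100, 2400)         # the 2.4C running at 3.1GHz
headroom_229 = overclock_percent(229, 220)    # best case between 220 and 230MHz

print(f"A64 core speed: {a64_core:.0f}MHz ({a64_oc:.1f}% overclock)")
print(f"P4 overclock: {p4_oc:.1f}%")
print(f"229MHz over 220MHz: {headroom_229:.1f}% extra")
```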

Making numbers

I've had the distributed.net bug for more than five years, now. Since then, much worthier distributed computing projects have come along, but if I switched to one of those, I'd lose my hard-earned d.net stats, so I say to heck with finding the Centauri Republic and curing cancer.

Pentium 4s are not terribly good at any distributed.net tasks, but the d.net client still serves as an OK near-meaningless, CPU-intensive, RAM-speed-insensitive twiddle-benchmark for raw processor performance.

I expected the Athlon 64 to beat the P4 like a redheaded stepchild for d.net, and I was not very disappointed; for OGR and RC5 it won by factors of 1.42 and 1.45, respectively. Since d.net speed depends on practically nothing but raw CPU muscle, an Athlon 64 that managed to run at 2300MHz (as I'd hoped this one would) would beat a 3.1GHz P4 by a factor of about 1.7 for d.net tasks.
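That "about 1.7" projection assumes d.net throughput scales linearly with clock speed, which is the premise stated above and not something that was measured. A small Python sketch of the extrapolation (the function name is hypothetical):

```python
def projected_factor(measured_factor, measured_mhz, target_mhz):
    """Scale a measured speed-up linearly with clock speed."""
    return measured_factor * target_mhz / measured_mhz

# Measured at 1980MHz against the 3.1GHz P4; projected to a hoped-for 2300MHz
ogr_projected = projected_factor(1.42, 1980, 2300)
rc5_projected = projected_factor(1.45, 1980, 2300)

print(f"OGR at 2300MHz: about {ogr_projected:.2f} times the P4")
print(f"RC5 at 2300MHz: about {rc5_projected:.2f} times the P4")
```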

OK. Basic speediness of CPU established. I may be crazy, though, but I'm not nuts; I'm not going to change CPU and motherboard just to punch up my d.net scores.

No. I'm not. Really. It would be silly. Not not not.

Excuse me while I put this paper bag over my mouth. Ahem.

While we're testing subsystems in isolation, what about RAM speed?

The P4 system's got dual channel DDR400 memory; the A64's only got one channel. SiSoft Sandra's semi-random guess at my P4 system's memory bandwidth said it managed around 4925 megabytes per second. It said the overclocked Athlon managed a hair over 2700Mb/s.

If I were doing scientific computation or horrendous database tasks, I might care deeply about that. I'm not, though, so I moved on to game tests.
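For context, here's a rough Python sketch comparing those Sandra numbers with the nominal peak of DDR400, which moves 8 bytes per transfer per 64-bit channel at 400 million transfers per second. Both test machines were overclocked, so their RAM may not have been running at exactly DDR400 speed; treat the nominal peaks as a yardstick only, not as the real ceiling for either box.

```python
BYTES_PER_TRANSFER = 8           # one 64-bit DDR channel moves 8 bytes per transfer
DDR400_MEGATRANSFERS_PER_S = 400 # nominal DDR400 rate

def nominal_peak_mb_s(channels):
    """Nominal peak bandwidth in MB/s for a number of DDR400 channels."""
    return channels * DDR400_MEGATRANSFERS_PER_S * BYTES_PER_TRANSFER

# Sandra figures quoted in the text, in MB/s
p4_measured = 4925    # dual channel P4 system
a64_measured = 2700   # single channel Athlon 64 system ("a hair over")

p4_peak = nominal_peak_mb_s(2)
a64_peak = nominal_peak_mb_s(1)
measured_ratio = p4_measured / a64_measured

print(f"Nominal peaks: P4 {p4_peak}MB/s, A64 {a64_peak}MB/s")
print(f"Measured P4 bandwidth is about {measured_ratio:.2f} times the A64's")
```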

I fed each system my old Radeon and the new GeForce 6800, using the latest official Windows XP drivers for each. Not ATI's beta tweaked drivers, partly because of their beta-ness and partly because they don't do anything much for last-generation Radeons anyway.

I then called forth something so hideous that no monster would want to share a closet with it:

Default Excel Charts.

Sensitive readers might like to avert their eyes.

In the default benchmark from Futuremark's 3DMark03, the Athlon 64 performed marginally better with both graphics cards than the P4 (7% with the Radeon, 3% with the GeForce), but the big difference was obviously made by the graphics card upgrade. Switching from the 9700 to the 6800 increased the P4 result by a factor of 1.7, and the A64 result by a factor of 1.65.

3DMark numbers are never utterly reliable, partly because of the widely reported problem of graphics card makers ~~cheating~~ optimising their drivers to work especially well in the various 3DMark versions, and partly because the 3DMark tests don't run quite the same every time. The bouncing barrels at the beginning of 3DMark2001 don't bounce the same way every time; the flopping trolls at the end of 3DMark03 don't flop the same way every time. If two systems get 3DMark scores that differ by only a few per cent, therefore, there may be no real difference at all; you have to do plenty of runs and average the results to be sure. But a big difference like this is definitely valid, even when you lazily do just the one run, as I did this time.
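The "do plenty of runs and average" advice can be sketched in Python like this. The scores below are made up purely for illustration (they are not results from these tests), and the two-times-the-spread threshold is an arbitrary rule of thumb, not a proper statistical test.

```python
from statistics import mean, stdev

def summarize(scores):
    """Return the average and the run-to-run spread of a list of scores."""
    return mean(scores), stdev(scores)

def clearly_different(scores_a, scores_b):
    """Crude check: is the gap between averages well beyond the run-to-run noise?"""
    mean_a, spread_a = summarize(scores_a)
    mean_b, spread_b = summarize(scores_b)
    return abs(mean_a - mean_b) > 2 * (spread_a + spread_b)

# Hypothetical benchmark scores, invented for this example only
system_a = [5000, 5060, 4950, 5020]
system_b = [5100, 5040, 5150, 5080]   # a couple of per cent ahead of A
system_c = [8500, 8430, 8560, 8490]   # far ahead of A

print(clearly_different(system_a, system_b))
print(clearly_different(system_a, system_c))
```

With these invented numbers, the gap between A and B is smaller than the noise, while the gap between A and C swamps it.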

On to Doom 3, in 800 by 600 (which is as much resolution as people with Radeon 9x00s are likely to be able to comfortably use) and in 1600 by 1200 (mmmmmmm). I tested with the graphics quality set to "High", and using the single "demo1" demo that's included with the game. Doing a timedemo run like this doesn't give completely accurate gameplay figures, since things like the physics engine aren't doing anything, but the timedemo is a nicely standardised rendering-speed test.

Doom 3's very heavy texture load means that, as with Quake 2 in days of yore, you always have to run the timedemo once to get all of the textures cached, then again to get an actual number unpolluted by disk access.

The same, only more so. The difference between the two CPUs is so small as to be lost in the noise (which is, I think, why the P4 wins by a hair for the 800 by 600 GeForce 6800 test; it's experimental error), but the difference between the two graphics cards is night and day. Even at 800 by 600, the 6800 kicks the frame rate up by a factor of 1.5 on both platforms; at 1600 by 1200, it makes things a monstrous two point six times as fast.

Not bad for a one minute upgrade that costs little more than half as much as the current flagship cards, eh?

There was no point testing with older games, 3DMark flavours before the current one and so on, because everybody already knows that any old GeForce FX or Radeon 9500-or-better will give you perfectly fine performance for those, with any of a number of current and mildly elderly CPUs.

Coming clean

Actually, the first thing I did after getting the hardware for this piece was not set up the test and carefully run the benchmarks.

The first thing I did was whip the 9700 out of my P4 machine, pop the 6800 in, and play games.

Which, as the graphs above make clear, made a great big difference. 1600 by 1200 in Doom 3? No sweat. The 6800 can't deliver good frame rates with Doom in its "Ultra" graphics mode, but no hardware yet exists that can run that mode at a decent speed.

1600 by 1200 is a dumb resolution to use on all but the biggest CRT monitors, if you're not playing games. Most 19 inch CRT monitors can handle 1600 by 1200; some 17 inchers can, too. But "handle", here, just means "show a picture", not "show a clear picture". They just don't have enough phosphor dots for each pixel to get the usual rule-of-thumb 1.25 phosphor triads (I explain what those are in my old monitor review here).

Things are different with LCD monitors, of course. A 1600 by 1200 LCD will display that resolution with razor sharpness. But LCDs with that many pixels aren't yet in most consumers' price range - they're all big twenty inchers, because no monitor manufacturer's decided to see if people want to buy a super-resolution laptop panel in a desktop-screen housing.

So if you want a two megapixel screen for a reasonable price, you've got to go for CRT.

Now, to be honest, 21 inch CRTs can't display 1600 by 1200 very clearly either. A modern 19 inch CRT (with an 18 inch viewable diagonal) can display 1280 by 960 with decent clarity, but stepping up to a 21 incher (20 inch diagonal) will, as you'd expect, probably only give you 20/18ths as many phosphor triads. You don't automatically get smaller dot pitch along with your bigger tube.

So, all other things being equal, you can display 1422 by 1067 pixels (give or take a pixel...) on a 21 incher with the same clarity as 1280 by 960 on a 19 incher.
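That figure comes from scaling both dimensions by the ratio of the viewable diagonals. A minimal Python sketch, assuming (as above) the same dot pitch and aspect ratio on both tubes; the function name is just for illustration:

```python
def scaled_resolution(width, height, old_diagonal_in, new_diagonal_in):
    """Scale a resolution by the ratio of two viewable diagonals."""
    scale = new_diagonal_in / old_diagonal_in
    return round(width * scale), round(height * scale)

# 1280 by 960 on an 18 inch viewable tube, moved to a 20 inch viewable tube
equivalent = scaled_resolution(1280, 960, 18, 20)
print(equivalent)
```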

I've got a 21 inch CRT monitor, now; it's a humble Samsung SyncMaster 1100P+, and I quite like it.

A 1600 by 1200, 20 inch diagonal LCD screen will set you back something in the vicinity of $AU2000 at the moment. The retail price of the 1100P+ is about $AU800. That is a significant part of the reason why I quite like it.

I don't mind the 1100P+'s slightly curvy screen, and the desk bent but did not break when I heaved it up onto it. It'll do for now.

At 1600 by 1200, this monitor's clear enough. I haven't had to increase any font sizes. But I do now want to run all of my games at 1600 by 1200.

You may want to as well, even if you don't have a big screen. Pin-sharp resolving power doesn't matter nearly as much for games as for more boring software. Actually, running your monitor above its clear resolution capabilities can be a good thing for games, because it softens "jaggies" just like antialiasing, but without making the graphics card do as much work.

You have to work hard to smooth things in the digital domain. In the analogue world, you just include a bandwidth bottleneck and the universe does your smoothing for you, as every video producer who's worried about the quality of his images, then breathed a sigh of relief when he realised that it's all just going to end up on VHS with a composite display, knows.

Other chips

So a 3GHz-ish P4 CPU, or some other chip that performs much the same, would appear to be perfectly adequate for even cutting edge games, with a suitable graphics card.

What if you don't currently have such a CPU?

Well, you can of course just buy yourself a base model P4. The slowest P4 many stores stock these days is a 2.8GHz Northwood, but those are decently cheap now; Aus PC Market have them for $AU291.50 delivered. They'll overclock to three-point-a-little-bit gigahertz, no problem; 3.1GHz is only an 11% overclock for a P4 2.8.

And then there are AMD's old Socket A chips. Most Athlon XPs are "budget" processors these days, but they still deliver excellent performance. The fastest 2.2GHz Barton-core Athlon XP 3200+ will set you back more than the price of an equivalently speedy P4 (which is not a 3.2GHz; the "3200+" actually only manages about the speed of a 2.8GHz P4), so it's not a great buy, despite the fact that Socket A motherboards are generally cheaper than Socket 478. But if you settle for a 1.92GHz Barton-core XP 2600+, you'll get almost nine-tenths as much speed as a 3200+, and pay half as much for your chip. Aus PC sell 2600+s for $AU181.50 delivered. You can't complain about that.

Intel have made a surprisingly strong showing in the budget-chip market with their new Prescott-based Celeron Ds, which are designated in their new way with three-digit model numbers instead of clock speeds.

The older P4-based Celerons paid a terrible price for the cut-down cache that made them so cheap. A 2.6GHz one had a hard time beating a 1.8GHz full P4. Now, though, the Celeron 325, 330 and 335 (at 2.53, 2.66 and 2.8GHz, respectively) cost the same as the previous models with the same clock speeds, and are up there with 2.4GHz P4s and Athlon XP 2500+s at stock speed. Whaddaya know - Prescott is good for something!

The Celeron Ds overclock well, too. If you get one that'll hit better than 3.7GHz, you'll enjoy better than 3GHz P4 performance from your "budget" chip.

AMD's answer to these new Celerons is the Sempron, which takes over the "value" end of their CPU line from the venerable Duron. The Socket A Sempron's unexciting, but the not-really-available-yet Socket 754 version's a wee ripper. Its "3100+" model number is not undeserved, it'll sell locally for $AU225.50 delivered, and it gives you an Athlon 64 upgrade path.

Overall

Would I have seen a better performance gain from the AMD CPU if I'd picked an Athlon 64 FX? Yes, I would. I could have used more expensive RAM with a cheap Athlon 64, too; the CAS 2.5 Geil sticks are quite a lot cheaper than CAS 2 DDR400, though, and they've got those nifty heat spreaders that serve no thermal purpose at all but do make it easier to handle the RAM.

Would any of this have given a gain that mattered? I don't think so.

A higher-end video card would have been nice, too, but they're very questionable value for money.

The Radeon X800 XT's a screamer, but it's difficult to find one in Australia at the moment, and if you do then you may wish you hadn't. You can, as mentioned above, pay more than $AU1000 for the fancier XTs. You can spend the same kind of money on a GeForce 6800 Ultra, if you can find one.

Or you could buy a plain 6800 like I did, and spend the $AU400 or more you save on an Athlon XP and a motherboard to put it on.

Or on a whole lot of Robitussin. Whatever.

If you want to push the boundaries with anisotropic filtering and antialiasing at high resolutions - 1280-by-whatever and above - then the plain 6800 will fall down. But if you can do without that, then even very demanding games will cruise along A-OK on a plain 6800 at 1600 by 1200. Which, I'm here to tell you, looks nice.

So - am I going to upgrade my whole PC?

Nope. My ancient, carved-from-stone, older-than-the-endoskeleton P4 can keep hobbling along on its walking frame for a while yet.

Am I keeping the GeForce 6800, though?

Oh yes.

Buy stuff!

Readers from Australia or New Zealand can purchase all kinds of computer gear from Aus PC Market.

(If you're NOT from Australia or New Zealand, Aus PC Market won't deliver to you. If you're in the USA, try a price search at DealTime!)