How fast is a hard drive? How long is a piece of string?

Publication date: 20 March 2010. Originally published 2009 in Atomic: Maximum Power Computing.

Last modified 03-Dec-2011.

As I write this in March 2010, the personal-computer world is on the cusp of a great transition.

Rotating magnetic disks have been the fastest way to store large amounts of digital data for, literally, decades. But they're finally being phased out. And now people are having even more trouble figuring out how you measure the speed of a drive.

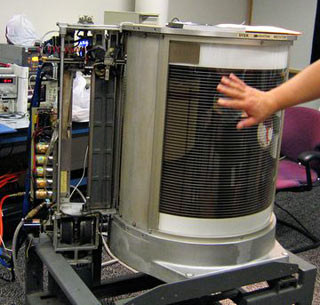

IBM's 350 Disk File came out in 1956.

It used fifty 24-inch platters to store about enough data for one JPEG photo from a current consumer digital camera.

Around 1980, the first hard drives suitable for use with home computers came out. They managed to fit a little more data on each of their platters (usually eight-inch, or 5.25-inch for the famous original ST-506) than the 350 Disk File crammed onto all fifty of its 24-inchers.

Today, it's perfectly normal for consumer hard drives to hold some one thousand four hundred gigabytes, from a sticker capacity of "1.5 terabytes". And they're cheap, too.
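The gap between the sticker and the real number is just arithmetic. Here is a minimal sketch of it, assuming the usual convention that drive makers count a terabyte as 10^12 bytes while the operating system reports gigabytes of 2^30 bytes. The function name is made up for illustration, and filesystem overhead is ignored.

```python
# Sticker capacity versus what the operating system reports.
# Assumption: the sticker uses decimal units (1 TB = 10**12 bytes) and the
# OS uses binary units (1 "GB" = 2**30 bytes). Filesystem overhead, which
# eats a little more, is not modelled here.

def sticker_tb_to_binary_gb(sticker_tb: float) -> float:
    """Convert a decimal-terabyte sticker capacity to binary gigabytes."""
    total_bytes = sticker_tb * 10**12
    return total_bytes / 2**30


if __name__ == "__main__":
    for sticker_tb in (1.5, 2.0):
        binary_gb = sticker_tb_to_binary_gb(sticker_tb)
        print(f'A "{sticker_tb}-terabyte" drive holds about {binary_gb:.0f} binary gigabytes')
```

For the "1.5 terabyte" drive this comes out a hair under 1400, which is where the "one thousand four hundred gigabytes" comes from.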

The days of spinning disks are numbered, though, because solid-state drives based on Flash memory are now consumer products.

If you don't need more than about 100 gigabytes of storage in your desktop PC or laptop - and laptop users, in particular, may be able to get away with much less - then the only "hard drive" you now need is an SSD. And it'll only add two or three hundred bucks (US or Australian; as I write this the two currencies are pretty close in value) to the price of your computer.

If you need lots of storage then a pure SSD solution remains economically insane. Per gigabyte, SSDs still cost an easy 25 times as much as magnetic storage. (Or much, much more, if you want a really high-capacity, extra-fast SSD.)
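To see what that ratio implies, here is a rough sketch that uses only the round numbers above: a 100-gigabyte SSD for about $250 (the midpoint of "two or three hundred bucks", treated here as the SSD's whole price, which is an assumption) and the 25-to-1 cost ratio. The hard-drive figure it prints is therefore derived, not a quoted price.

```python
# Back-of-envelope cost per gigabyte, using the column's round numbers.
# Assumptions: the SSD costs $250 for 100 GB, and magnetic storage is
# 25 times cheaper per gigabyte. Nothing here is a real price list.

SSD_PRICE_DOLLARS = 250.0   # assumed midpoint of "two or three hundred bucks"
SSD_CAPACITY_GB = 100.0
SSD_TO_HDD_COST_RATIO = 25.0


def dollars_per_gb(price_dollars: float, capacity_gb: float) -> float:
    """Price divided by capacity."""
    return price_dollars / capacity_gb


def implied_hdd_price(capacity_gb: float) -> float:
    """Price of a hard drive of the given size, if the 25x ratio holds."""
    ssd_rate = dollars_per_gb(SSD_PRICE_DOLLARS, SSD_CAPACITY_GB)
    return capacity_gb * ssd_rate / SSD_TO_HDD_COST_RATIO


if __name__ == "__main__":
    ssd_rate = dollars_per_gb(SSD_PRICE_DOLLARS, SSD_CAPACITY_GB)
    print(f"SSD: ${ssd_rate:.2f} per gigabyte")
    print(f"Implied hard drive: ${ssd_rate / SSD_TO_HDD_COST_RATIO:.2f} per gigabyte")
    print(f'Implied price of a "1.5-terabyte" drive: ${implied_hdd_price(1500.0):.0f}')
    print(f"The same 1500 GB as SSD: ${1500.0 * ssd_rate:.0f}")
```

The last line of output is the "economically insane" part.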

But there's nothing stopping you using a modest-sized SSD as your boot device, to give you all the lightning-booting joy of the SSD crowd, and putting your bulky data on one or more cheap "1.5-terabyte" hard drives.

(For most PC users, "your bulky data" includes various applications and games, whose performance will be at least somewhat limited by the speed of the drive they're on. If your system drive is an SSD, though, software installed on other drives will still run significantly faster whenever the bottleneck for some task isn't how fast the application's own files can be read, but some disk-hitting operating-system function.)

There's no definite line where the improved performance of SSDs outweighs their higher price. It's clear that we're getting pretty close to this line, but there's no way to be specific about it.

One reason for this is that hard drives are continuing their mind-boggling capacity climb and price drop, such that "2TB" drives will soon be the dollars-per-gigabyte value winners. It's difficult to find a sensible economic reason to move on from a technology with that kind of price-performance curve.

Another reason is that Flash-RAM SSD technology is still young enough that there's a lot of tweaking and twiddling still going on.

The general flavour of this will be familiar to anybody who likes to live on the cutting edge of consumer computing.

Like, there's the TRIM command for SSDs, whose purpose is to prevent the peculiar low-level data allocation behaviour of Flash-RAM SSDs from making them get slower the more you use them.

TRIM is integrated into new and exciting operating systems, and can be run as a kind of "SSD defrag" with a separate program in older OSes, and it's all great. Except it will usually, if not always, prevent you from recovering any deleted data after a TRIM command has had its way with the relevant Flash memory cells.

Good old undelete programs have been saving forgetful users' bacon since a floppy drive was a pretty expensive peripheral, but they look set to fade away into the netherworld with ZMODEM and cylinder-head-sector. This is, at least, good news for the paranoid.

(And yes, automatic versioning systems like Time Machine and Previous Versions can make undeleting unnecessary. But these systems need drive space to store their data, and SSDs aren't going to have as much of that as magnetic disks for quite a while yet.)

One big reason why people can't figure out when to switch from magnetic-disk to SSD, though, is that it's not actually very easy to meaningfully measure the speed of a hard drive, or an SSD.

I wrote about reification in this very column, ninety-three issues ago. Reification is the fallacy of treating an abstraction as if it were a real thing. Like, for instance, assuming that because "IQ tests" exist, and get pretty consistent results, there must be a "general intelligence" that such tests are measuring. "Drive speed" is another one of those abstractions.

There are, you see, two basic hard-drive performance specifications - latency, and transfer rate.

Latency is how long it takes for a drive to respond to a command. For a hard drive, it's the time it takes the drive to move its heads, select the right one, and wait for its platters to spin to the right position for it to start accepting or delivering data.

Modern hard drives have a random-seek access time of around ten milliseconds. An SSD has no moving parts, so its latency is much lower, though not as much lower as you might think.
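The spinning part of that figure is easy to work out: on average the drive has to wait half a revolution for the right sector to come around. Below is a minimal sketch of that sum. The six-millisecond average seek time is a hypothetical round number picked for illustration, not a figure from any data sheet, and head-selection time is ignored as negligible.

```python
# Where a hard drive's access time comes from: head movement plus the
# average wait for the platter to spin to the right place.

def average_rotational_latency_ms(rpm: float) -> float:
    """Average wait for the right sector: half of one platter revolution."""
    ms_per_revolution = 60_000.0 / rpm
    return ms_per_revolution / 2.0


def average_access_time_ms(rpm: float, average_seek_ms: float) -> float:
    """Head movement (seek) plus the average rotational wait."""
    return average_seek_ms + average_rotational_latency_ms(rpm)


if __name__ == "__main__":
    ASSUMED_SEEK_MS = 6.0  # hypothetical average seek time, for illustration only
    for rpm in (5400, 7200):
        spin_wait = average_rotational_latency_ms(rpm)
        total = average_access_time_ms(rpm, ASSUMED_SEEK_MS)
        print(f"{rpm} RPM: {spin_wait:.2f} ms rotational wait, {total:.2f} ms total access")
```

At 7200RPM the rotational wait alone is a bit over four milliseconds, which is why no amount of clever head mechanics gets a spinning drive's access time down to SSD territory.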

Transfer rate is how fast the data moves, once the drive has gotten around to starting a read or write.

Both of these specifications are obviously the bedrock statistics on which all disk-subsystem performance is built.

But you shouldn't worry about either of them.

Computer enthusiasts love benchmarks. It's like putting your car on a dynamometer, or whipping it around an autocross track. Except computer benchmarks are often free, and don't require you to budge from your comfy chair.

You can, however, spend a lot of time benchmarking hard drives, and end up with no actual useful information at all.

Sustained transfer rate is particularly irrelevant. If you're copying big files from drive to drive then raw drive speed can make a difference; the abovementioned "2TB" consumer drives now have sustained transfer rates of at least 60 megabytes per second - well over 100 for the faster outer tracks. If you're copying from drive to drive on a modern PC, you'll get a sizeable slice of that raw speed as actual transfer rate for the data you care about, after all of the real-world overheads and bottlenecks.

But those overheads and bottlenecks are, still, large. Multiple drives in the one computer will always give you very noticeably less than the single-drive theoretical maximum performance.

(Today's ultra-high-capacity consumer drives move so much data under the heads per second that even the few 5400RPM "green" models can saturate the actually-available bandwidth of SATA-1. You very probably really don't need more transfer rate than that; it's physically impossible for practically any normal desktop-computer task to use it. Background media-file or antivirus scans can use a ton of drive bandwidth, but they generally include a lot of seeks, which render the immense sustained transfer rate of high-density 3.5-inch drives pretty much irrelevant. An SSD with a significantly lower sustained transfer rate can complete a task like this a great deal faster, and with less effect on the system's performance for simultaneous other tasks, because of the SSD's very fast seek speed.)
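A toy model makes the seek-versus-bandwidth trade-off concrete. It's a sketch, not a benchmark: it charges one full access latency per file plus the time to stream the data, and ignores caching, command queuing and everything else. The hard-drive numbers are the round figures used in this column; the SSD's 0.1-millisecond latency and 80 megabytes per second are assumptions picked purely to represent "very fast seeks, lower sustained rate".

```python
# Toy model: time for a task = (number of seeks x latency) + (data / transfer rate).

def task_seconds(seeks: int, megabytes: float,
                 latency_ms: float, transfer_mb_per_s: float) -> float:
    """Estimated seconds for a task that does `seeks` random accesses
    and moves `megabytes` of data in total."""
    seek_time = seeks * latency_ms / 1000.0
    streaming_time = megabytes / transfer_mb_per_s
    return seek_time + streaming_time


# Round figures from the text for the hard drive; assumed figures for the SSD.
HARD_DRIVE = {"latency_ms": 10.0, "transfer_mb_per_s": 100.0}
ASSUMED_SSD = {"latency_ms": 0.1, "transfer_mb_per_s": 80.0}

if __name__ == "__main__":
    workloads = {
        "scan 50,000 small files (1000 MB total)": (50_000, 1000.0),
        "copy one 10,000 MB file": (1, 10_000.0),
    }
    for name, (seeks, megabytes) in workloads.items():
        hdd = task_seconds(seeks, megabytes, **HARD_DRIVE)
        ssd = task_seconds(seeks, megabytes, **ASSUMED_SSD)
        print(f"{name}: hard drive {hdd:.1f} s, SSD {ssd:.1f} s")
```

With these numbers the hard drive wins the single big copy, and loses the seek-heavy scan by a factor of nearly thirty.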

For other everyday tasks - starting Windows, switching from one big application to another, doing stuff while a BitTorrent client sucks down data in the background - raw drive transfer rate can be almost completely irrelevant. The interaction of the moving parts and firmware, the motherboard data buses, OS disk management and whatever software you're running adds so many variables to the calculation that transfer rate gets lost in the noise.

(Usually, when someone's copying a giant file from one drive to another, they're not doing it from one drive to another in that one computer. Any current hard drive, and lots of old ones, will be faster than the transfer rate of USB 2 or gigabit Ethernet. If you've got a FireWire 800 external drive then the hard disk inside it may be able to influence transfer speed, a bit; likewise for USB 3.)

It's also possible to fail at the start of your journey to figure out how fast a storage device is, by picking the wrong benchmark software.

As a general rule, the easier a disk benchmark is to run, the less useful it is.

SiSoft Sandra, for instance, is very easy to use, and has drive-speed tests that're pretty much useless. (They may yet find an application as a robust source of random numbers.)

People who think they're original nerd gangstas, yo, like to use Iometer. It's free to download, and has many obscure and technical settings, which make it look all heavy-duty and serious. As, indeed, it is. But Iometer is meant to test drives for multi-user servers, which the gangstas are probably not actually building.

(See also, teenage pirates who use the ultra-expensive server versions of Windows, just because they can.)

There are several Windows drive-speed testers that're pretty easy to use, yet give better-than-random results. HD Tach used to be a decent choice, but it doesn't work right on RAID arrays, I'm not sure if it behaves itself entirely properly in Vista/Win7 yet, and it won't do write tests unless you register it. You might (or might not) prefer HD Tune, which has more features, even in the free version.

My current choice when I find myself unable to talk my way out of doing drive benchmarks is h2benchw, from the German computer magazine c't (short for "Magazin für Computertechnik"). It's a command-line utility, and the main documentation is in a language I do not understand. So it must be good.

H2benchw can do a wide variety of tests, including write tests - but because there's no good way for write benchmarks on drives that contain data to cope with that data, h2benchw will only do write tests if a drive isn't even partitioned.

H2benchw even has "application" tests, which aim to mimic things like swap-file use, Photoshop and virus scanning - you know, the sorts of things that you actually do with a drive when you're not benchmarking it. But the readme, with refreshing honesty, says the app tests are outdated and unreliable.

(I did them anyway, for this piece about SSDs from the end of 2008.)

I think the only current publicly-available benchmark package that has somewhat reliable application tests is the "HDD Test Suite" in PCMark, from what used to be called Mad Onion but is now boringly called Futuremark. You don't get the HDD Test Suite in the free version of PCMark, though. The free trial of "PCMark Vantage", the most recent version of the benchmark, lets you run the "Basic Edition" benchmark including disk tests... once. Then it's $US6.95 for a "Basic" license or $US19.95 for an "Advanced" one.

The idea of benchmarks is to let you compare the performance of different hardware. Simple transfer-rate and seek-speed numbers, if they're made by non-broken software, actually usually are pretty good for that.

They still don't mean much, though. The drive with a 40% higher sustained transfer rate probably won't be noticeably faster in normal use. People dazzled by impressive but irrelevant benchmarks can also very easily conclude that some change has made a big difference to system performance, when it hasn't actually improved anything at all.
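One way to put a number on "probably won't be noticeably faster" is the Amdahl's-law formula: speeding up one part of a job only helps in proportion to how much of the job that part was. The sketch below applies it to drives. The fractions of time spent on sustained transfer are pure assumptions for illustration; nobody measured them.

```python
# Amdahl's law: overall speedup when only part of a task gets faster.

def overall_speedup(fraction_affected: float, part_speedup: float) -> float:
    """Overall speedup when `fraction_affected` of the original run time
    (0.0 to 1.0) is made `part_speedup` times faster."""
    unaffected = 1.0 - fraction_affected
    return 1.0 / (unaffected + fraction_affected / part_speedup)


if __name__ == "__main__":
    TRANSFER_RATE_GAIN = 1.4  # the "40% higher sustained transfer rate"
    # Hypothetical shares of a task's time spent on sustained transfers.
    for fraction in (0.05, 0.10, 0.50, 1.00):
        gain = overall_speedup(fraction, TRANSFER_RATE_GAIN)
        print(f"{fraction:.0%} of time in sustained transfer: {(gain - 1.0):.1%} faster overall")
```

If only a tenth of the waiting is sustained transfer, the 40% faster drive makes the whole task about 3% faster. You need a stopwatch, or a benchmark, to notice that.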

(See also: Audiophiles.)

There's a huge difference between SSDs and hard drives, but you don't need a benchmark program to tell you that. And because consumer SSDs are still a pretty young technology, benchmarks can give weird results if they run into some odd optimisation trick. Or give exactly the same results, even after a tweak that's made an SSD work a lot better. I am far from convinced that even a top-of-the-line consumer benchmark like PCMark Vantage is really reliable, for this.

All a lot of people really seem to want from benchmarks is just the fun of doing something very technical, and then seeing some larger numbers than they'd previously seen.

If you're just using a drive, not reviewing it, though, then... just use it.

There are only three speeds it can actually have, after all: blindingly fast, tolerably fast, or too slow.

I suppose a benchmark that just told you that wouldn't sell very well.