Atomic I/O letters column #11

Originally published in Atomic: Maximum Power Computing. Reprinted here 31-Jul-2002. Last modified 16-Jan-2015.

Meandering electrons

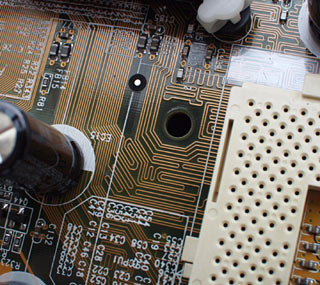

I've changed my motherboard recently, and I noticed on my new and old boards, they have curvy traces near some of the chips. I thought the traces were supposed to go directly towards the chip, not curve around like they do. Any ideas why this is so?

Chris Holzworth

Weird back-and-forth signal paths aren't there just because the motherboard designers got bored.

Answer:

Modern PC motherboards commonly have components that talk to each other at 100 or 133MHz. Tweaky overclockers' boards can work at Front Side Bus speeds well above 166MHz, provided they're not let down by other components; the cryogenic-cooling speed-record nutcases routinely hit FSB speeds above 210MHz on Athlon boards, as I write this. And yes, that means a CPU-to-chipset speed of more than 420MHz.

Let's say you're signalling at a mere 133MHz. When 133.33 million signals are going past every second, each one lasts only 7.5 billionths of a second. In 7.5 billionths of a second, light in a vacuum only moves about 2.25 metres. Signals in a circuit board trace move at around two-thirds of the speed of light - about 1.5 metres per 133MHz clock tick. Only one metre, at 200MHz.
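If you'd like to check that arithmetic, here's a minimal sketch. The function name and constants are made up for illustration; the speed of light is rounded to 300,000 kilometres per second, and the two-thirds velocity factor is just the rough figure quoted above, not a measured value for any particular board.

```python
# Rough distance a signal covers during one clock period.
C_VACUUM_M_PER_S = 3.0e8      # speed of light in a vacuum, rounded
VELOCITY_FACTOR = 2.0 / 3.0   # "around two-thirds" of c in a board trace (approximate)

def metres_per_tick(clock_hz, velocity_factor=VELOCITY_FACTOR):
    """Distance in metres travelled in one clock period at the given frequency."""
    period_s = 1.0 / clock_hz
    return C_VACUUM_M_PER_S * velocity_factor * period_s

for mhz in (133.33, 200.0):
    in_trace = metres_per_tick(mhz * 1e6)
    in_vacuum = metres_per_tick(mhz * 1e6, velocity_factor=1.0)
    print(f"{mhz:g}MHz: {in_vacuum:.2f} m in a vacuum, {in_trace:.2f} m in a trace")
```

Passing `velocity_factor=1.0` gives the light-in-a-vacuum number; the default gives the slower in-trace one.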

The problem that motherboard designers run into, here, is that it's important for multi-pin parallel data connections to be very tightly synchronised. But it's impossible to arrange various multi-pin components in such a way that the distances between the data pins that need to be connected are equal. And different signal trace lengths mean different signal delays.

Ground pins, power pins, other stuff that isn't running at some outrageous clock speed, that doesn't matter. But if you want your data to stay synchronised (and you do - the alternative is ugly performance-eating buffering) then you need to make your data traces from Component A to Component B all the same length. The way you do that is by putting extra spirals and switchbacks and curlicues in the traces that would otherwise be too short.
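To put rough numbers on the length-matching idea, here's a sketch that takes a handful of direct pin-to-pin distances and works out how much squiggle each trace needs to match the longest one, plus the timing skew you'd get if you didn't bother. The trace lengths are invented for the example, and the signal speed is the same approximate two-thirds-of-light figure; real board designers use proper layout tools and measured propagation values.

```python
SIGNAL_SPEED_M_PER_S = 2.0e8  # roughly two-thirds of c; an approximation

def padding_needed_mm(direct_lengths_mm):
    """Extra length each trace needs so that all of them match the longest one."""
    longest = max(direct_lengths_mm)
    return [longest - length for length in direct_lengths_mm]

def skew_ps(length_difference_mm):
    """Arrival-time difference, in picoseconds, caused by a length mismatch."""
    return length_difference_mm / 1000.0 / SIGNAL_SPEED_M_PER_S * 1e12

traces_mm = [62.0, 75.0, 81.0, 70.0]  # hypothetical direct routes for four data lines
for direct, extra in zip(traces_mm, padding_needed_mm(traces_mm)):
    print(f"{direct:.0f}mm direct: add {extra:.0f}mm of squiggle "
          f"(or put up with {skew_ps(extra):.0f}ps of skew)")
```

With these made-up lengths, the shortest trace would arrive a bit under a tenth of a nanosecond ahead of the longest one if left unpadded.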

Trace length isn't the only factor. There are capacitance and resonance and resultant signal settle time issues as well; when you're dealing with high speed digital systems you end up fiddling with a lot of analogue variables. But if you're wondering why bits of your motherboard look like Hampton Court Maze, it's likely to be mainly for trace length reasons.

DVD-R = CD-R?!

I have seen several banners and advertisements regarding the copying of DVDs on standard CD writers.

I find this VERY hard to believe, but I'm curious whether this can be done. Considering the very high prices of DVD-R drives, simply burning DVDs on a CD-R seems too good to be true!

Am I right, or am I just in denial of a technological breakthrough?

Pujitha Fernando

Unsolicited commercial e-mail. Is there anything it can't do?

Answer:

These spamming scum-sucking uncle-rapers aren't talking about burning DVDs. They're spammers, so they're lying thieving pig-licking toilet blockages with feet, but even if they were honest, they'd just be talking about ripping DVDs to one or another video CD format.

This is not a very big deal - take your DVD video stream (which is easy enough to access, since the encryption used on DVDs is famously craptacular), re-encode it with a lower bandwidth codec (like DivX) so it'll fit on a CD, write the result to the CD, done.

Recompressed DVDs can look surprisingly good considering their greatly reduced data rate, but they can't ever look as good as the original video, and they won't be a DVD either. If you convert DVD video to MPEG-1 and burn it to CD in Video CD ("White Book" format) then it'll be playable in any DVD player that works with CD-Rs and can play VCDs - but you'll need a couple of CDs per DVD. If you just convert DVD video to a big fat DivX AVI file on a plain data CD-R ("Orange Book Part II" format), though, no normal DVD player will understand it.

Oh, by the way, if any spammers are reading this - you know, guys, your continuing campaign to ask me at least once a day if I want to see girls having sex with horses, brothers having sex with sisters or grandparents having sex with grandchildren has not, yet, created in me any desire to see any of these things.

Not to worry, though. I'm sure you'll stick with it.

CPU ID roadshow

After reading your magazines I've started to get into tweaking my computer. But I have come across something that I can't seem to figure out. When I originally bought my computer (not brand name crap), it was meant to be a Pentium III 600E (Coppermine), so it should run at 133MHz FSB with a 4.5X multiplier. But when I was going to delve into a bit of overclocking I saw that in the BIOS the CPU is running at 6x100MHz. Since all Intel processors have locked multipliers, that means it will really be running at 450MHz.

So I changed the settings so it was 4.5x133MHz, and upon booting up it said it was now running at 800MHz. This would mean that the multiplier is locked at 6X, which means I don't have a 600E. It could either be an original Pentium III at 600MHz or it could be an 800E (Coppermine) Pentium III underclocked to 600MHz. I'm guessing that it would be the former and that I have been ripped off!

But is there any way that I can tell exactly what CPU I have? And what sort of overclock could I expect out of it with my MSI MS-6163 motherboard?

altan8

Answer:

A mere "P-III 600" is a pre-Coppermine-core P-III. A "600E" is a Coppermine P-III running at 100MHz FSB, which is what you have. A 600EB is a Coppermine running at 133MHz FSB; that's what the B on the end means. So you got what you paid for, unless someone told you that you were getting a 600EB, or otherwise promised you 133MHz FSB.

The real performance difference between the two bus speeds for desktop computer tasks is small enough that it's hardly worth worrying about. Core speed makes a difference; the exact combination of FSB and multiplier you use to get that core speed, generally, doesn't.
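The sums behind all this are simple enough: core speed is FSB speed times multiplier. Here's a minimal sketch using the figures from the letter; the function name is made up, and "133MHz" is taken to be the usual 133.33.

```python
def core_mhz(fsb_mhz, multiplier):
    """Core clock in MHz: front side bus speed times the CPU multiplier."""
    return fsb_mhz * multiplier

# A 600E: multiplier locked at 6, meant for a 100MHz FSB.
print(round(core_mhz(100.0, 6.0)))    # stock speed
# The same chip with the FSB wound up to 133MHz.
print(round(core_mhz(133.33, 6.0)))   # the overclocked figure the BIOS reported
# A 600EB gets to the same core speed by a different route.
print(round(core_mhz(133.33, 4.5)))
```

The first and third lines come out at the same core speed, which is the point: the 600E and 600EB differ in how they get to 600MHz, not in where they end up.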

It's hard to say how overclockable your CPU is likely to be; you've just got to try it. Fiddle with different FSB speeds and see what's stable. Your CPU might be perfectly happy at 800MHz or more, especially if you goose up the core voltage a bit.

Unresourceful PC

I use a lot of fairly hefty applications simultaneously for Web design work. It is becoming increasingly frustrating to keep having to close important programs or restart Windows because I very soon become flat out of system resources, as reported by SiSoft Sandra.

I would expect that the programs I am using (Adobe, Macromedia etc products) would not be leaking resources to any significant extent. I almost always have a great deal of my 512MB of RAM free. If it is not a memory related problem, at least in regards to the amount installed, what exactly are "system resources" and how can I upgrade/tweak my system to prevent this from happening? I am running a Duron 800.

James Cowling

Answer:

You don't specify what flavour of Windows you're using, but my infallible psychic abilities tell me that it's something from the Windows 95 series. That includes Win98, Win98SE, and WinME.

All of these OSes are carrying large and unseemly piles of baggage from the distant past, and "System Resources" is one of the larger suitcases.

It doesn't matter how much physical RAM you have. System Resources are different. They're little areas of memory that Windows uses for housekeeping tasks, like drawing things on the screen, remembering what windows are open, and so on.

One of the ways in which Windows 95 was a Great Leap Forward from Windows 3.11 was that it greatly increased the System Resources limits. But they remain limited, and power users can slap into those limits quite easily. Some programs need only a small share of various Resources, but other popular apps can slurp up huge chunks, comparatively speaking. When your aggregate free System Resources amount drops below about 15%, life gets weird.

Many applications, including big expensive commercial ones, "leak" System Resources; they don't release them when they're done with them, even if you quit the application. The only solution, short of adapting Groucho's medical advice and just not running that application, is to reboot a lot.

Or, of course, you can give your Win95-series OS the flick, and switch to an NT-series Windows version - Win2000 or WinXP. Neither of these Windows flavours has any System Resources limits. Which is why crappy resource-leaking software survives; if most of the users of a given heavyweight app are running an NT-series Windows version, they won't care about the software's brain damage.

4in1 for XP?

Does Windows XP, when you install it, automatically install Via 4in1 drivers for motherboards that need them? I asked a computer support person at a local store and he seemed to think XP did it all - but he was very unconvincing.

Mark Woods

Answer:

Via's regularly updated motherboard driver installer (you can download the latest version from here) is, of late, quite a well-behaved little critter. It installs what's needed, and doesn't install what isn't, on any Via chipset motherboard.

When Windows XP first came out, it did indeed have built-in drivers for Via-chipset motherboards that were as good as anything the 4in1 installer of the time could give you. Which was a nice change. Every Windows flavour before WinXP needs the 4in1 drivers to be installed after the OS, or you'll be putting up with strangely slow performance from basic compatibility-mode drivers.

There have been a few updates to the 4in1 driver set since WinXP was released, though. The built-in WinXP drivers are still perfectly OK, but the updated Via ones are a little better. So you should use the Via installer.

Poor choice of cards

I have a Pentium III 1GHz with a GeForce 2 MX graphics card in the AGP slot. I decided to add a secondary video adapter and monitor - a PCI S3 Trio64 card and an old 15" monitor.

I hooked it all up and when I turned the computer on, to my dismay I found that Windows 98 decided that the crappy PCI slot card should be the default adapter. This is obviously not what I wanted and so I set out to remedy it, first looking to the control panel. This gave no answers even after clicking every single option, and waiting for the computer to restart.

So I decided to install the cards in a different order, that is the PCI card first, and then the AGP card, and see if this made any difference. Again this did absolutely nothing and the Trio64 was still the primary adapter.

In desperation I turned to the Windows help file (god help me) and after a little searching found a page which told me to switch the monitors around to get the right adapter with the right monitor. I WANT TO GET THE PRIMARY MONITOR TO BE MY GEFORCE 2!

Does this happen because the adapters are incompatible in some way?

David Williams

Answer:

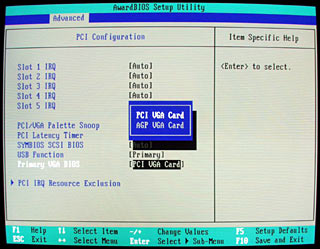

It's not Windows' fault; it's the BIOS.

Many motherboards let you pick whether to make an AGP or PCI graphics card the primary adapter.

Go into the BIOS setup (by pressing Delete on startup, probably, unless you've got a brand-name machine that insists on doing it some other way) and look for an option vaguely resembling the one in the picture above. If you can find a "Primary VGA BIOS" or "Video Initialisation Order" sort of option and switch it from the default "PCI" to "AGP", you're in business. If there's no such option, then it's possible that a BIOS update will help, but it's probable that it won't. There are various BIOSes that don't allow this option to be changed, and are stuck on PCI-first.

If that's the case, then you can get a new motherboard, or get a better PCI graphics card, or (more elegantly) get a dual-output graphics card, like a TwinView GeForce2 MX.

Where should I stick this sensor?

A quick question regarding a Macpower DigitalDoc5 and its sensors.

I have recently bought a whole new PC and I am planning where to put the thermal sensors from the Digidoc5 for temperature monitoring. When I read through the manual, it specifically says not to put the flat thermal sensor between the processor plate and the heatsink because it interferes with the transfer of heat from one to the other, even though it is the best place for monitoring the temperature of the CPU.

What is your opinion on this? Are Macpower just being over-cautious by saying it interferes with the heat transfer?

Chris Kerin

This sensor is fat. Really fat.

Answer:

Macpower are right to tell you not to put anything but a thin layer of thermal transfer compound between your CPU and its heat sink. The shiny raised contact patch area in the middle of your processor - which is the part that actually touches the heat sink metal, and through which the CPU gets rid of practically all of its heat - is very flat. So, with any luck, is the bottom of your heat sink. You're not bolting a slab of cast iron onto the side of a pot-bellied stove, here; the tolerances in CPU-to-cooler mating are very small, and it doesn't take much to introduce an unacceptable gap.

Flat thermal sensors are less than half a millimetre thick at the actual sensor point, and considerably thinner elsewhere, but that's still more than enough to seriously compromise the thermal contact between the CPU and the heat sink. Sure, you can fill the whole area up with thermal grease, but thermal grease isn't anything like as good at moving heat as direct contact. It's just a lot better than air. The purpose of the grease is to fill the air gaps, but those gaps should be microscopic.