Atomic I/O letters column #25

Originally published in Atomic: Maximum Power Computing. Reprinted here 23-Sep-2003. Last modified 16-Jan-2015.

Woozy gamer

I'm a typical gamer who really enjoys my gaming, however I get a massive headache (my friends call it motion sickness) after playing 15-30 minutes of games like Unreal Tournament 2003, Quake 3, Doom, or Jedi Outcast, which require 360 degree eye movement. However, when I play favorites such as Virtua Tennis, Dynamite Cop, or any of the Street Fighter series, I can play for hours with no headache. Is there something wrong with me, and if there is, what can I do to fix it?

Stephen

Answer:

You've got "simulator sickness", and it's quite common, though triggers and symptoms vary.

Basically, motion sickness of all kinds - car sickness, sea sickness, air sickness, simulator sickness - is caused by input to the brain that doesn't add up. Usually, it's because your view of the world is changing orientation, but your inner ear and proprioceptive senses (the senses that tell you where your body parts are) tell you you're not moving, or at least not moving in the same way as the view. The result is usually nausea, but headaches are quite common as well.

Simulator sickness - which some people called "DOOM Induced Motion Sickness", or DIMS, when it first hit the news - usually comes from perceiving a moving world on your monitor, but not actually moving. Apparently, some people get it only when there's significant lag between control inputs and movement. Some people get it only when the frame rate's low. Some people only get it from games with a view that bobs as you move. Some people even reckon DirectX makes them sick but not OpenGL.

Quite a lot of simulator sickness sufferers seem to get it when they first start playing FPS games, then get over it quickly. Some, like you, don't.

There are things you can try that may help. Basically, you want to give your brain more visual stimuli that tell it you're not moving. If you play in a dark room, light it up; have plenty of obviously stationary visible stuff around the monitor. If you have a huge monitor and/or sit very close to the screen, move back, so the moving image takes up less of your field of view.

There are a couple of papers on simulator sickness here and here, in case you're interested.

Decorative metal

In my quest to pump out as much performance as possible from my system I have been looking at getting video RAM sinks, but I remember that the bible states that they would not do much.

Why is this so? Surely having RAM sinks sitting on my video memory with a case fan blowing a breeze over them from outside the box would help me push a few more cycles and put a bigger smile on my face. I am new to overclocking, but cannot see how this couldn't help. I understand it won't let my Radeon 9500 Pro outshine every other card on and off the market, but it should be able to make it a little bit better.

Brett

RAM heat sinks may look great, but they don't do much.

Answer:

RAM sinks would help, a bit. A very little bit. The clock speed ceiling difference is likely to only be a few per cent, and that'll barely even add up to anything measurable in any real world test. X per cent more RAM speed does not give you X per cent more system speed.
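To see why a few per cent more RAM speed barely registers, here's a rough Amdahl's-law-style sketch in Python. The share of run time that's actually waiting on the RAM is a made-up illustrative number, not a measurement; real games vary a lot, and the function name is just for illustration.

```python
def overall_speedup(ram_bound_fraction: float, ram_speedup: float) -> float:
    """Amdahl's-law estimate: only the RAM-bound share of run time gets faster."""
    if not 0.0 <= ram_bound_fraction <= 1.0:
        raise ValueError("ram_bound_fraction must be between 0 and 1")
    if ram_speedup <= 0:
        raise ValueError("ram_speedup must be positive")
    unaffected = 1.0 - ram_bound_fraction       # time that doesn't care about RAM speed
    sped_up = ram_bound_fraction / ram_speedup  # RAM-bound time, now shorter
    return 1.0 / (unaffected + sped_up)


if __name__ == "__main__":
    # Hypothetical: 30% of frame time waits on video RAM, which is clocked 5% higher.
    gain = overall_speedup(0.3, 1.05)
    print(f"{(gain - 1) * 100:.1f}% faster overall")  # 1.4% faster overall
```

Even if the whole workload were RAM-bound, you'd only get the full five per cent; anything less than that, and the gain shrinks accordingly.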

Putting RAM sinks on video card memory is more sensible than putting them on system memory, because video RAM is clocked faster and runs hotter. Even high-clocked video card RAM isn't at all likely to manage more than 10% higher clock speeds with heat sinks than it does without, though.

For reference, a quite pessimistic estimate of the heat output from the memory on an 8-DDR-RAM-chip, 128Mb video card that's being heavily used is about seven watts. That's from all of the chips put together; each individual chip will be emitting less than a watt. Possibly a lot less.
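The per-chip figure is just division, sketched here in Python under the assumption that the heat's shared evenly between the chips (which it may well not be). The constant and function names are illustrative.

```python
TOTAL_MEMORY_WATTS = 7.0  # pessimistic whole-card figure from the text
CHIP_COUNT = 8            # 8-chip, 128Mb card


def watts_per_chip(total_watts: float, chips: int) -> float:
    """Split total memory heat evenly across chips (assumes equal load on each)."""
    if chips <= 0:
        raise ValueError("chips must be positive")
    return total_watts / chips


if __name__ == "__main__":
    per_chip = watts_per_chip(TOTAL_MEMORY_WATTS, CHIP_COUNT)
    print(f"{per_chip:.3f} W per chip")  # 0.875 W per chip
```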

Less than a watt per chip simply doesn't make them very warm; there's not much heat that needs to be gotten rid of. The difference between just blowing a breeze over the RAM, and adding heat sinks to the chips and then blowing the same amount of air over it, closely approaches zero - particularly if you attach the sinks the way most people do, with thermal tape that may be easy to remove (compared with metal-filled epoxy, anyway...) but doesn't have very good thermal conductivity. Naked RAM chips that're exposed directly to the airflow may well be just as well cooled as chips that have heat sinks stuck to them with tape.

Why do lots of video cards come with heat sinks on the RAM, then? Well, a few per cent is a few per cent, and heat sinks allow the RAM a little more thermal leeway, so the card will be a little more stable at stock speed in a poorly ventilated PC.

The main reason for the RAM sinks, though, is cosmetic. They look cool. That's about it.

Chilly storage

I have been reading on the tech forums, and I have heard people say that if your HDD is dead, pop it in the freezer for half an hour and it should work for long enough to get your files off. Do you know how this works? I have tried it and it does work, but am puzzled about why.

Duncan

Answer:

Freezing isn't a miracle cure, but yes, it can bring some dead drives back for a brief period. Theories vary on why this works - differential contraction freeing up bearings, contracting platters increasing head clearance - but it indubitably, sometimes, does.

If you take an ice-cold drive out of the freezer, it'll shortly be covered, inside and out, with condensation. Water droplets on drive platters are a Bad Thing. To avoid this problem, you can try attaching power and data cables to the drive and then putting it in a Ziploc bag, sealing the opening as well as you can, so it's not got too much air from which water can condense. If you can manage it, you could also try running the power and data cables from the PC right into the freezer, and running the drive (which should at least be sitting on some newspaper or something, not on a bare ice-covered shelf...) while it's in there!

Peltier power

I have been building a PC with a Peltier device cooling a water system for some time, and recently had to do some wondering. I was told that an 80 watt Peltier (around 6.6 amps at 12 volts) would run off the PSU, when I had installed a six amp transformer to drive it. The transformer I installed is about eight times the size of the one in the PSU, and even the wiring in the PSU doesn’t seem able to handle 6 amps.

Although the PSU is rated at 300 watts, most of this is available to the 5 volt rail. Yet the PSU says that eight amps is available to the 12 volt rail. How can such high amperage be available from such a small transformer in the PSU, and will it run the Peltier device and the computer together?

Peter

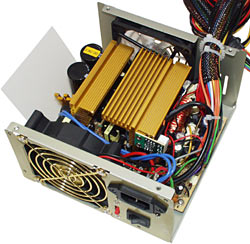

You'll find a lot of components inside a PC PSU, but a big transformer won't be among them.

Answer:

The two power supplies look so different because they work in quite different ways.

Your "transformer" is, I presume, actually a linear power supply, which contains a big transformer, but also has a bridge rectifier and a smoothing capacitor or three, and probably a voltage regulator as well. If it doesn't have a regulator, it'll deliver more volts when it's lightly loaded than when it's delivering its full capacity. If it's just a bare transformer, the most you can expect your Peltier device to do when connected to it is nothing.
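Here's a rough Python model of why an unregulated supply's voltage wanders with load. It assumes a hypothetical 12 volt RMS transformer secondary, about 0.7 volts lost in each of the two bridge diodes that conduct at any moment, 50Hz mains, and a 10,000 microfarad smoothing capacitor. It ignores the transformer's own winding resistance, which makes the sag worse in real life; the names are illustrative.

```python
import math

DIODE_DROP_V = 0.7  # assumed per silicon diode; two conduct at a time in a bridge


def no_load_dc_volts(secondary_rms: float) -> float:
    """With nothing drawing current, the capacitor charges to the AC peak minus two diode drops."""
    return secondary_rms * math.sqrt(2) - 2 * DIODE_DROP_V


def ripple_volts(load_amps: float, mains_hz: float, capacitance_f: float) -> float:
    """Approximate peak-to-peak ripple for a full-wave rectifier: I / (2 f C)."""
    return load_amps / (2 * mains_hz * capacitance_f)


if __name__ == "__main__":
    v_peak = no_load_dc_volts(12.0)           # hypothetical 12 V RMS transformer
    ripple = ripple_volts(6.0, 50.0, 0.010)   # 6 A load, 50 Hz mains, 10,000 uF
    print(f"no load: {v_peak:.1f} V")                                    # no load: 15.6 V
    print(f"at 6 A: dips to about {v_peak - ripple:.1f} V each half-cycle")  # about 9.6 V
```

A regulator after the capacitor is what turns that wobbly 10-to-15-odd volts into a steady 12.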

Anyway, linear power supplies are simple to build and quite safe and don't emit any electromagnetic interference - because everything's running at power line frequency. But they're not very efficient, and they have to be big and heavy, because of that big transformer.

Your computer's PSU, on the other hand, is a switchmode power supply, which is a lot more complex than the linear supply. Its little transformer is a very high frequency device, which is why it can be so much smaller, and the whole PSU is much more efficient than the linear supply. It produces some electromagnetic interference, but you can't have everything.

By the way, the standard current rating for the 18AWG (American Wire Gauge) wire that most PSUs use for their drive power cables is nine amps.

If you had a very beefy PSU, then you could indeed run your Peltier from it; a 550 watt rated PSU, for instance, would probably be good for more than 20 amps on the 12 volt rail. Your current PSU isn't likely to be able to manage it, though.
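The 12 volt arithmetic, sketched in Python. The 80 watt and eight amp figures come from the letter; the three amps for everything else on the 12 volt rail (drive motors, fans and so on) is a hypothetical guess, and the function names are illustrative. A negative headroom means the rail's overloaded.

```python
PELTIER_WATTS = 80.0
RAIL_VOLTS = 12.0
RAIL_RATED_AMPS = 8.0  # 12 V rail rating quoted in the letter


def amps_needed(watts: float, volts: float) -> float:
    """Current for a load rated at `watts` when run at `volts`: I = P / V."""
    return watts / volts


def headroom_amps(rated: float, *loads: float) -> float:
    """Rated rail current minus everything drawing from the rail."""
    return rated - sum(loads)


if __name__ == "__main__":
    peltier = amps_needed(PELTIER_WATTS, RAIL_VOLTS)
    other_12v_loads = 3.0  # hypothetical: drives, fans etc. already on the 12 V rail
    left = headroom_amps(RAIL_RATED_AMPS, peltier, other_12v_loads)
    print(f"Peltier draws {peltier:.2f} A")  # Peltier draws 6.67 A
    print(f"headroom: {left:.2f} A")         # headroom: -1.67 A
```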

Mystery tasks

I am running Windows 2000 Pro. When I open Task Manager and check the "Processes" tab, my computer is running "svchost.exe" four times and it's taking up a lot of the RAM (about 20Mb mem usage; I have 256Mb of RAM). There is also "vsmon.exe" running (I don't know what that is), and there's a "GameChannel.exe" running when I'm not even running a game, and when I tell it to end process it closes everything. Please help.

Dean

Answer:

Svchost.exe is a generic host process for Windows services that run from dynamic-link libraries. Multiple instances of it don't mean the same thing's running more than once. Find more information about it, in Windows 2000, here.

Don't worry about these services eating lots of your RAM; if they're running but not doing anything, then most or all of their assigned memory will have been paged out to the swap file, leaving your actual physical RAM free. You can see how much physical memory you have free in the Performance tab of Task Manager.

As for the other two - are you running the ZoneAlarm personal firewall? Vsmon is their "True Vector Internet Monitor" program.

GameChannel.exe is Wild Tangent's GameChannel, um, thing, which you may have got as part of a Logitech driver install. It's disable-able. Assuming I'm thinking about the same version that you have, you should be able to right-click the Wild Tangent icon in your System Tray, choose the About item, then click the Options tab, uncheck every box and click Apply and/or OK. Then go to Control Panel, open the "Wild Tangent Control Panel" item, uncheck the "Automatic Updater Service Enabled" box and click OK.

Silent speed

I've been experimenting with overclocking my CPU (Pentium 4 2.4GHz), and I eventually figured out that to ensure stability, I had to change my PCI/AGP frequency to suit the CPU Host Frequency. My default FSB is 133 MHz, with the PCI/AGP freq at 33/66. I've already determined what kind of frequency will allow my computer to start or not, and my Mobo automatically resets the CMOS if it takes more than 20 seconds to load the Memory Test screen.

Anyway, the point is, I found out that at an FSB frequency of 148MHz, the PCI/AGP has to be at 37/74 MHz. I set it like this via the BIOS interface, and when I started Windows, no sound played. I tried playing some music in Winamp, and it came up with an error message saying, basically, that no sound card was detected.

I re-installed the sound card drivers just to be sure, but to no avail. Why won't it detect the sound card, and is this problem fixable? I mean, I'd like to have both a CPU speed of 2.66 GHz and also my MP3s.

Alex

Answer:

Presumably, the problem arose because you've wound the PCI bus speed up a bit, and the sound card doesn't work when it's clocked that high.

You don't mention what motherboard you have, but you seem to be saying that there are separate adjustment options for the CPU FSB and the PCI/AGP speed, but you're adjusting them as if they were locked together. You really ought not to have to do that; the separate adjustment options specifically exist so that you can change the FSB without discombobulating the rest of your PC.

Anyway, assuming you do need to use the higher PCI/AGP speed, then if your sound card's an actual separate card (not built into the motherboard), installing it in a different slot might help, but probably won't. Changing to another sound card might solve the problem, too, but there's no guarantee that the new card would like the elevated bus speed any more than the old one did.

You've only overclocked your CPU by eleven per cent, here, anyway; that's not going to give you much of a performance difference. Sure, it's 266-odd megahertz more, but that isn't that much when you're starting from 2400MHz. So if you have to go back most or all of the way to stock speed, you won't actually be losing much.
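The overclocking arithmetic, as a Python sketch. It assumes the P4 2.4's multiplier is locked, that "133MHz" really means 133.33MHz, and that the PCI and AGP clocks come from the FSB through fixed dividers of four and two, which is what raising the FSB to 148MHz and getting 37/74MHz implies. The function and dictionary key names are made up for illustration.

```python
from fractions import Fraction

# Assumed figures for the P4 2.4GHz in the letter.
STOCK_FSB_MHZ = Fraction(400, 3)  # "133MHz" is really 133.33MHz
STOCK_CORE_MHZ = Fraction(2400)
PCI_DIVIDER = 4                   # 133.33 / 4 = 33.33MHz PCI
AGP_DIVIDER = 2                   # 133.33 / 2 = 66.67MHz AGP


def overclock(new_fsb_mhz: Fraction) -> dict:
    """Work out core, PCI and AGP speeds when only the FSB changes."""
    multiplier = STOCK_CORE_MHZ / STOCK_FSB_MHZ  # locked multiplier: 18x
    core = multiplier * new_fsb_mhz
    pci = new_fsb_mhz / PCI_DIVIDER
    stock_pci = STOCK_FSB_MHZ / PCI_DIVIDER
    return {
        "multiplier": multiplier,
        "core_mhz": core,
        "core_gain_pct": (core / STOCK_CORE_MHZ - 1) * 100,
        "pci_mhz": pci,
        "pci_overclock_pct": (pci / stock_pci - 1) * 100,
        "agp_mhz": new_fsb_mhz / AGP_DIVIDER,
    }


if __name__ == "__main__":
    r = overclock(Fraction(148))
    print(f"core {float(r['core_mhz']):.0f}MHz (+{float(r['core_gain_pct']):.0f}%)")
    print(f"PCI {float(r['pci_mhz']):.0f}MHz (+{float(r['pci_overclock_pct']):.0f}%), "
          f"AGP {float(r['agp_mhz']):.0f}MHz")
```

The PCI line is the interesting one: with the buses tied to the FSB like this, everything on the PCI bus gets the same 11 per cent overclock as the CPU.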

Xtra Peeved by Athlon XP

I'm not sure if you've covered this topic previously, but I'll ask anyway: What kinds of tests do AMD run in order to come up with their XP rating conventions? I'm referring to the "real world performance" in comparison to an Intel processor of a higher clock speed, for instance the "Athlon XP 2600", which runs at 2.13GHz.

I own an Athlon XP 2600, and I find it consistently underperforms, although marginally, my friend's Intel 2.1GHz beast. Our machines are relatively similar (he built mine) so there shouldn't be too much variation on account of hardware specs. We run benchmark programs like 3DMark, and the frame rate counters in games. Looking through Atomic, I find that in some cases your tests have come to the same conclusions.

Is it legal, or ethical, for AMD to claim that their processor can perform on par with an Intel 2.6GHz processor when it can barely match a 2.1GHz? If so, can I use these tests as evidence to get my money back on the processor?

Alex

Amazingly enough, the pretty graphs from AMD's marketing department aren't a bunch of nonsense.

Answer:

Quick answer: No, the XP ratings aren't a rip-off. An Athlon XP with a rating that matches some given P4 won't beat it for everything, but it should beat it for most things, usually by a margin that's measurable but not particularly noticeable.

Long answer: AMD's Athlon XP performance rating numbers are supported by a suite of tests that's stayed the same since they released the original Athlon XPs. That test suite isn't a particularly weird one; it includes a bunch of standard office, content creation and game benchmarks.

AMD also haven't only been comparing the speed of their newest Athlon XPs with the speed of the first XPs rather than with P4s, though they have done plenty of that as the Athlon's climbed the speed ladder. Comparing Athlons only with Athlons would be perfectly fine if all the P4 lineup was doing was getting core speed increases, but the P4's had new core designs and bus speeds to boost its performance a bit more. A 3GHz P4 is more than twice as fast as a 1.5GHz one.

The Athlon's had new cores and a new bus speed too, though, and it turns out that it's pretty much maintained parity. All other things being equal, your XP 2600+ should be at least as fast as a 2.6GHz P4 machine.

All other things can't be equal, of course, since you can't run those two processors on the same motherboard. I can only presume that something else to do with your system - maybe hardware, maybe drivers - accounts for the difference between your system and your friend's.

The QuantiSpeed PR True Performance Initiative LongSillyName Rating isn't quite as straightforward as it seems, though, because the AMD benchmark suite is all system benchmarks, not CPU-only tests. That's sensible, but it means that other hardware components influence the results. That other hardware hasn't stayed the same since the first XPs were rated.

AMD seem to have made an effort to bury all of their old benchmark reports; go to their benchmarks page and it seems you'll only get the report for the flagship processor. Back when they tested the XP 2200+, though (the test data for which I had to poke around on the German-language part of the AMD site to find...), it was on a Gigabyte 7VRXP KT333 board with 256Mb PC2700 (DDR333) RAM and a GeForce4 Ti4600.

For the later XP 3000+ test, on the other hand, they used an Asus A7N8X Deluxe nForce2 board with 512Mb PC2700 RAM and a Radeon 9700. If they'd put the XP 2200+ on this board, it would have scored better.

So, to some extent, the XP ratings are based on extra hardware that has nothing to do with the CPU.

When AMD compared otherwise similar Athlon XP and P4 systems for a "Software Performance Guide", though - different motherboards, of course, but each with 512Mb PC2700 RAM and a Radeon 9700 - the overall score put the Athlon XP 2600+ on par with a 2.8GHz P4, and that's the kind of result that other testers have found, too.

(The Guide in question's downloadable in PDF format here.)

The P4 did a bit better in a few benchmarks - the Sysmark 2001 Internet Content Creation test, and a couple of the 3D game tests - but in no case did the 2.8GHz P4 win by more than a few per cent over the Athlon XP 2800+. And, of the 22 tests in the suite, 13 show a big enough win for the XP 2800+ over the 2.8GHz P4 (10% or more) that a normal user would have a decent chance of actually noticing.

Small CPU speed differences, in this situation, don't make much of a difference to the score. The 2.8GHz and 2.67GHz P4s, which differ by 5% in clock speed, only differed by 2% in overall score.

The Athlon XP 2600+ and 2800+ are almost as close in clock speed - they're within 6% of each other. They, however, differ by about 9% in AMD's overall performance results. This seemingly fishy result is accounted for by the 2800+'s higher 166/333MHz FSB speed. The 2700+ has the same higher speed; it scores higher than you'd expect from its core speed, too.

AMD's burying of their old benchmark data (try finding the Software Performance Guide from the AMD search page; I'll wait...) makes it look as if they've got something to hide, but the numbers don't actually seem to be particularly dodgy. Even the fact that the older benchmarks were audited by Arthur Andersen doesn't seem to have polluted them.